Professor Michelle Yvonne Simmons was named Australian of the Year in 2018 partly for her work in quantum computing. Her team has developed the world's first transistor made from a single atom. But what is quantum computing?

Today’s average computer has a lot of circuits that do loads of basic calculations like additions and multiplications very quickly.

Computers work by using information that is stored as combinations of electrical or magnetic impulses. The base unit of this information is called a bit, and it can hold one of two values: either on (stored as one) or off (stored as zero).

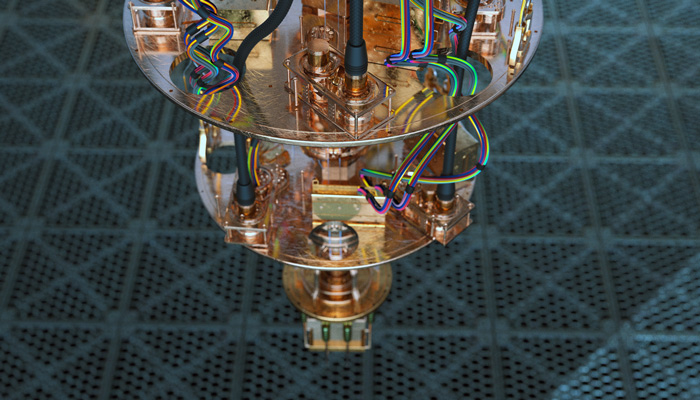

State of the art: A section of a quantum computer.

In this way, our computers combine billions of zeroes and ones to store data, perform calculations and exchange information, using electricity and magnetic fields which follow the laws of classical physics.
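As a rough illustration of how zeroes and ones can store data, the sketch below turns a short piece of text into bits and back again. The function names `text_to_bits` and `bits_to_text` are made up for this example, and the choice of UTF-8 with eight bits per byte is an assumption, not something specific to any one machine.

```python
def text_to_bits(text: str) -> str:
    """Encode text as a string of 0s and 1s (8 bits per UTF-8 byte)."""
    return "".join(format(byte, "08b") for byte in text.encode("utf-8"))


def bits_to_text(bits: str) -> str:
    """Decode a string of 0s and 1s back into text."""
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
    return data.decode("utf-8")


bits = text_to_bits("Hi")
print(bits)                # 0100100001101001
print(bits_to_text(bits))  # Hi
```

Two letters already need sixteen bits, which gives a feel for why real programs and files run to billions of them.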

A quantum computer does all the things that a standard computer does, but it uses the laws of quantum mechanics. Under these laws, the basic unit is no longer a bit with a definite value of zero or one. It’s a qubit, which can hold a blend of zero and one at the same time, known as a superposition.
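One common way to picture a qubit is as a pair of numbers called amplitudes, one for zero and one for one, whose squares give the odds of each result when the qubit is measured. The sketch below imitates that on an ordinary computer. The names `make_qubit` and `measure`, and the restriction to real-valued amplitudes, are simplifying assumptions made for illustration.

```python
import math
import random


def make_qubit(theta: float) -> tuple[float, float]:
    """Return amplitudes (for 0, for 1); theta sets the mix between them."""
    return (math.cos(theta / 2), math.sin(theta / 2))


def measure(qubit: tuple[float, float], rng: random.Random) -> int:
    """Measuring gives 0 or 1, with probability equal to the amplitude squared."""
    prob_zero = qubit[0] ** 2
    return 0 if rng.random() < prob_zero else 1


rng = random.Random(0)            # seeded so the run is repeatable
qubit = make_qubit(math.pi / 2)   # an equal mix of 0 and 1
results = [measure(qubit, rng) for _ in range(1000)]
print(sum(results) / len(results))  # close to 0.5
```

Changing `theta` tilts the odds towards zero or one, but each individual measurement still returns a plain 0 or 1.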

That greatly expands the calculations that computers can perform. In a sense, a quantum computer can calculate on all the inputs to a problem in parallel. This on its own isn’t enough, because when you measure you still get only one output, such as a random string of zeroes and ones. But for some problems, you can cleverly program your quantum computer to cancel out all the wrong strings and reinforce the right ones, so you are almost certain to get the correct answer at the end. For some problems, like cracking codes, the speed-up means that what would take years on our fastest supercomputers could take just minutes.
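The idea of cancelling the wrong strings and reinforcing the right one can be imitated with ordinary arithmetic. The sketch below is a classical simulation of a single round of Grover's search over the four two-bit strings. Grover's search is a standard textbook algorithm chosen here for illustration, not one the article names, and the function name `grover_round` and the choice of `10` as the right answer are assumptions.

```python
def grover_round(amplitudes: list[float], marked: int) -> list[float]:
    """One Grover step: flip the sign of the marked entry, then reflect about the mean."""
    flipped = [-a if i == marked else a for i, a in enumerate(amplitudes)]
    mean = sum(flipped) / len(flipped)
    return [2 * mean - a for a in flipped]


strings = ["00", "01", "10", "11"]
marked = strings.index("10")          # hypothetical "right answer"

# Start with every string equally likely: amplitude 1/2, probability 1/4 each.
amplitudes = [0.5] * len(strings)
amplitudes = grover_round(amplitudes, marked)

# The probability of measuring each string is its amplitude squared.
probabilities = {s: a ** 2 for s, a in zip(strings, amplitudes)}
print(probabilities)  # {'00': 0.0, '01': 0.0, '10': 1.0, '11': 0.0}
```

With only four strings, one round is enough: the three wrong strings cancel to zero and `10` is certain to be measured. Larger problems need more rounds and do not cancel quite so cleanly.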

But the unusual laws of quantum mechanics only reveal themselves at very small sizes like single atoms, or single photons of light.

At the moment, quantum computers only work at very low temperatures. They must use state-of-the-art superconductors and very precise engineering, like tiny antennas and electron tunnelling units, to tune circuits that are invisible to the human eye.

Macquarie’s Quantum Materials and Applications Group last year caused a nanodiamond, one thousandth the diameter of a human hair, to shine at 'super-radiant' levels at room temperature. This has important implications for the future of quantum computing: it may mean these computers don’t have to operate at extremely low temperatures.

But we are still a long way off developing a quantum computer for everyday use.

There’s now huge interest in quantum computing; it has shifted from an interesting research question to something that attracts very serious, large-scale industrial investment – which is what it will take to introduce the paradigm shift required for quantum computing to take off.

Large companies such as IBM, Google, Intel and Microsoft are now investing in this research. We think we might be a decade away from seeing quantum computers proliferate. If they do, they could be a threat to online credit card transactions and cryptocurrencies, whose security relies on the fact that the calculations needed to break their codes are very hard to do on a conventional computer.

On the other hand, they would be a boon to designing new pharmaceuticals and sorting through big data sets. What’s most exciting are the possibilities we haven’t even thought of yet, just as the inventors of computers in the 1940s didn't anticipate that we would one day hold one in our hands to navigate with Google Maps.

In the late 1990s and early 2000s, many sceptics didn't think quantum computing would ever happen. But there have since been some very precise experimental demonstrations, so most people now agree that we will see quantum computers in widespread use one day; it’s just going to take a lot of time and money.